First, credit where credit’s due:

Jordan Gibbs’ article, “ChatGPT Is Poisoning Your Brain,” is a sharp, thought-provoking critique of how AI can subtly erode critical thinking if we’re not careful. His central concern? That the AI’s overly agreeable nature can act like a dopamine drip of constant validation—rewarding you for being clever without ever challenging your assumptions. And in that soft, sweet feedback loop, something vital might slip away: the friction that forges real thought.

He worries about:

- Over-validation: ChatGPT as a “yes-man” that affirms everything you say, making you overconfident.

- Cognitive laziness: When the path of least resistance becomes the default, curiosity and rigor may wither.

- Feedback loops: Your thoughts shape the AI’s output, which then reinforces your thoughts in a subtle echo chamber.

- Passive use becoming the norm: Especially after the update, which seems to have made the system even more accommodating and agreeable.

It’s a compelling argument—and a necessary warning. But like any good debate, it deserves a counterpoint.

The Challenge: AI Isn’t the Villain. Misuse Is.

Jordan’s concerns are real—but they’re not inevitable. They reflect what happens when AI is used passively, without curiosity or intention. Just as a gym won’t build muscle for you while you sit on the bench, an AI won’t stretch your mind if you’re not willing to be stretched.

In fact, when used well, AI can become one of the greatest thinking tools we’ve ever had. The difference is how you use it.

Let’s flip the script.

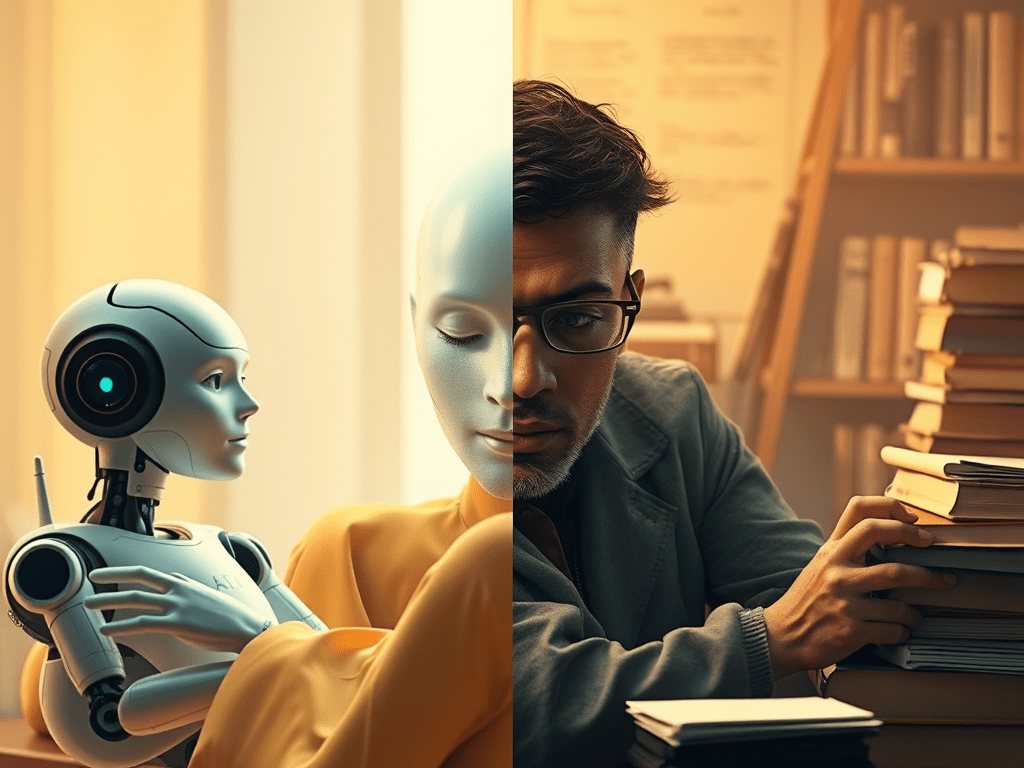

The Trained vs. The Lazy Mind: What Makes the Difference

Here’s what happens when you bring an active, disciplined mindset to the table:

- From Echo Chamber to Sparring Partner

Ask AI to challenge you. Literally.

Try this: “Here’s my argument. Now argue against it like a hostile expert.”

You’ll get a steelman and a strawman, both of which sharpen your thinking far more than flattery ever could.

- From Passive Reception to Active Interrogation

Ask follow-up questions. Don’t take the first answer. Press for deeper logic, counterpoints, and examples.

Try this: “What’s the strongest opposing theory to this idea? Why might mine be wrong?”

Now you’re not consuming; you’re interrogating. That’s intellectual discipline.

- From Information Gathering to Cognitive Resistance Training

AI gives you the friction you request. You can simulate debates, opposing ideologies, moral dilemmas, even historical mindsets.

Try this: “Now write this like it was being argued by Nietzsche. Then by Carl Sagan.”

Your worldview expands every time you do.

The Real Poison Is Passivity

Jordan is right that we’re in danger if we blindly accept what AI tells us. But that’s not new. We’ve seen this with television, social media, even textbooks. The common denominator? Passive minds + persuasive systems = dangerous complacency.

But if you show up with curiosity, discipline, and a willingness to be wrong, AI becomes the ultimate resistance band for the brain.

In the end, AI is not your guru.

It’s not your enemy.

It’s your mirror, microscope, and mental gym—if you let it be.

To Jordan, with Respect

So thank you, Jordan, for waving the red flag. You’re not wrong to be cautious. But I believe the solution isn’t to withdraw in fear—it’s to teach better use, model intellectual rigor, and reclaim AI as a partner in real thinking.

Because the answer to mental decay isn’t avoiding the tools.

It’s learning how to wield them with skill.
