Facial recognition tech is back in the dock, accused of harbouring racial bias—and this time the charge sheet includes the scientists who built it. After comments made on Good Morning Britain suggesting that bias in facial recognition cameras reflects the bias of their creators, the debate has gone from technical to combustible in record time.

So now we’re left asking: is the camera racist, the coder biased, the dataset flawed, or are we just allergic to nuance in 2026? 🤷‍♂️🔥

🎥 The Camera, The Coder, and The Convenient Villain

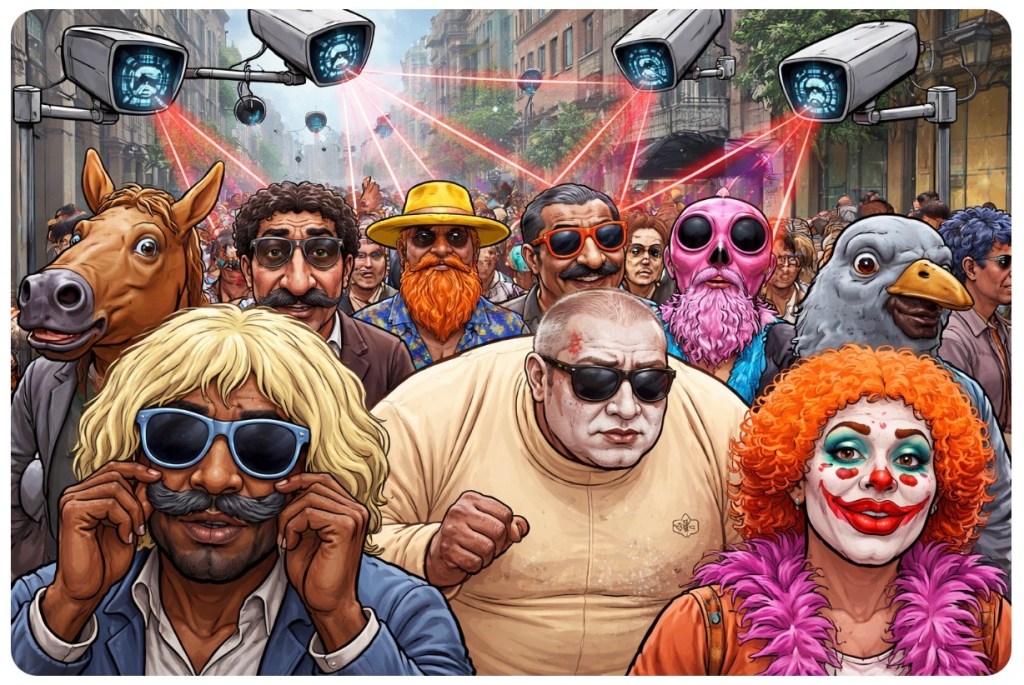

Let’s untangle this circus.

Facial recognition systems are trained on data. Lots of it. Mountains of it. If that data underrepresents certain groups, the system sees fewer examples of them and tends to make more errors on them. That’s not ideology; that’s statistics. But in the age of outrage, statistical imbalance quickly morphs into moral indictment.

So when someone says, “It’s the bias of the person who created it,” the implication is explosive: coders—many of whom are from diverse backgrounds themselves—are somehow hard-wiring prejudice into lines of Python like Bond villains stroking a cat. 🐱💻

Is bias possible? Of course. Humans build systems. Humans are imperfect.

Is it as simple as “the team must secretly agree with the police”? That’s a Netflix thriller plot, not a peer-reviewed conclusion.

The real issue is far less cinematic and far more mundane:

- Who collected the training data?

- How representative was it?

- Who tested the system—and how rigorously?

- What incentives pushed it to market before it was fully stress-tested?

But subtlety doesn’t trend. “Complex systemic data issue” doesn’t spark viral fury. “Racist camera” does. 📈

And here’s the twist: blaming scientists wholesale—especially without evidence—risks flattening a complex technological challenge into a morality play. That helps no one. Not communities worried about misidentification. Not police forces trying to modernise. Not developers trying to fix real flaws.

We don’t solve bias by pointing fingers. We solve it by auditing data, improving oversight, diversifying test sets, and holding institutions accountable with evidence—not insinuation.
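For the curious, here is roughly what "auditing with evidence" can look like in practice. This is a minimal Python sketch, not anyone's production pipeline: the function name `audit_by_group`, the record layout, and the toy numbers are all assumptions made up for illustration. It reports each group's share of the test set alongside its false match rate (strangers wrongly matched) and false non-match rate (the right person wrongly rejected).

```python
from collections import defaultdict


def audit_by_group(records):
    """Summarise test-set share and error rates per demographic group.

    `records` is an iterable of (group, same_person, predicted_match) tuples.
    This layout is a hypothetical one chosen for the sketch.
    """
    counts = defaultdict(
        lambda: {"genuine": 0, "impostor": 0, "false_non_match": 0, "false_match": 0}
    )
    for group, same_person, predicted_match in records:
        c = counts[group]
        if same_person:
            # Genuine pair: an error is failing to match the right person.
            c["genuine"] += 1
            if not predicted_match:
                c["false_non_match"] += 1
        else:
            # Impostor pair: an error is matching two different people.
            c["impostor"] += 1
            if predicted_match:
                c["false_match"] += 1

    total = sum(c["genuine"] + c["impostor"] for c in counts.values())
    report = {}
    for group, c in counts.items():
        n = c["genuine"] + c["impostor"]
        report[group] = {
            "share_of_test_set": n / total,
            "false_match_rate": (
                c["false_match"] / c["impostor"] if c["impostor"] else None
            ),
            "false_non_match_rate": (
                c["false_non_match"] / c["genuine"] if c["genuine"] else None
            ),
        }
    return report


# Toy data, invented purely to show the shape of the output.
toy_records = (
    [("A", True, True)] * 3
    + [("A", True, False)] * 1
    + [("A", False, False)] * 4
    + [("B", True, True)] * 1
    + [("B", False, True)] * 1
)

for group, stats in sorted(audit_by_group(toy_records).items()):
    print(group, stats)
```

A gap between groups in a table like this is evidence worth acting on. It says nothing, by itself, about anyone's intent, and a group with only a handful of test cases (like "B" above) mostly tells you the test set needs diversifying before any conclusion is drawn.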

Because once we start assigning intent without proof, the debate stops being about technology… and starts being about theatre. 🎭

🔥 Challenges 🔥

Are we tackling real flaws in tech—or just chasing the loudest headline?

Is calling the camera “racist” a necessary wake-up call… or a lazy shortcut?

And when does criticism become conspiracy?

If you’ve got a take—measured, furious, sarcastic, surgical—we want it. But bring substance, not just smoke. Drop your thoughts in the blog comments (not just on social media). 💬👇

👇 Like it. Share it. Argue with it.

The sharpest insights (and the spiciest but smartest comments) will be featured in the next issue of the magazine. 📝🔥
