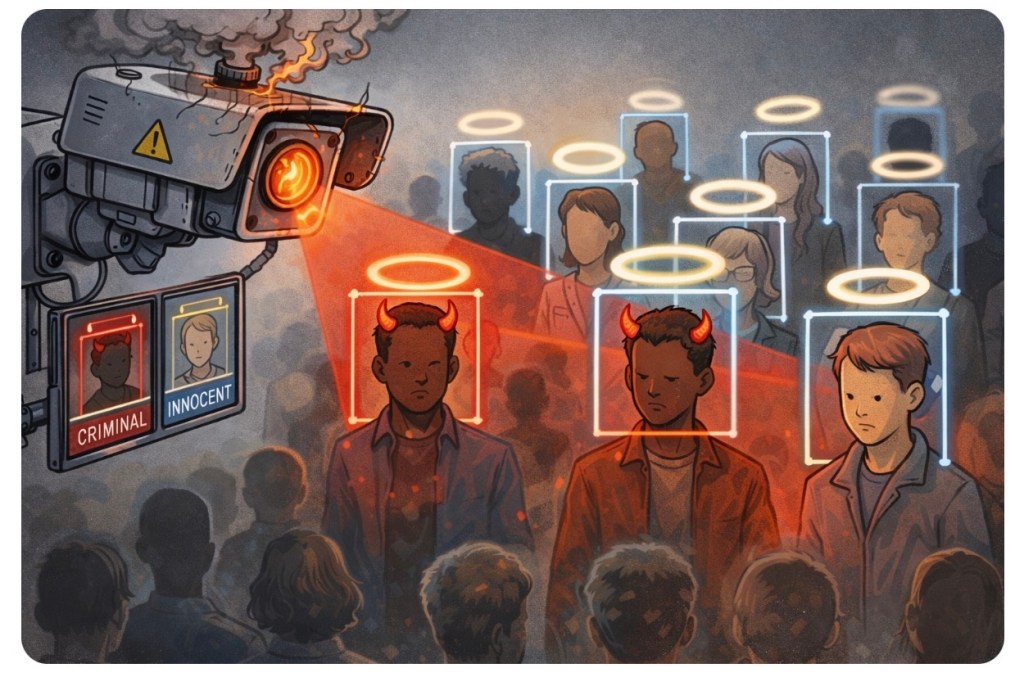

🤖🚓 A police force rolls out shiny new facial recognition tech, promises cutting-edge crime fighting… and then quietly shelves it when the results start looking less like justice and more like a glitchy episode of Black Mirror. Turns out, when your algorithm starts disproportionately flagging certain groups, the issue isn’t “who it catches.” It’s how badly it’s built.

🧠 Algorithmic Genius or Digital Guesswork Gone Wild?

Let’s get one thing straight—technology doesn’t magically become objective just because it runs on electricity instead of opinions. Facial recognition systems are only as good as the data they’re trained on, and spoiler alert: a lot of that data has historically been about as balanced as a three-legged chair.

So when a system starts disproportionately identifying Black individuals, the conclusion isn’t “wow, the robot cracked the code on crime.” It’s “congratulations, you’ve automated bias at scale.” 🎯

Because here’s the uncomfortable truth: crime rates are shaped by complex social, economic, and systemic factors—not skin colour. Pretending otherwise isn’t analysis, it’s lazy stereotyping dressed up as “common sense.”

And the real kicker? Independent testing, including NIST’s 2019 evaluation of demographic effects in face recognition, has repeatedly found that many of these systems have higher error rates for people with darker skin tones. Meaning the tech isn’t just biased; it’s often flat-out wrong. Imagine being flagged as a suspect because an algorithm squinted at your face and said, “Eh, close enough.” 🙃
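
For the curious, here’s roughly how an audit would spot that kind of gap. This is a minimal Python sketch, not any vendor’s or police force’s actual method: the records, the placeholder group labels, the 0.7 threshold, and the function name `false_match_rate_by_group` are all invented for illustration. It counts, per group, how often two different people get scored as “the same person.”

```python
from collections import defaultdict

# Invented toy records for illustration only: (group, same_person, similarity_score).
# "group_a" and "group_b" are placeholder labels, not real measurements.
COMPARISONS = [
    ("group_a", False, 0.41),
    ("group_a", False, 0.35),
    ("group_a", False, 0.72),
    ("group_a", True, 0.91),
    ("group_b", False, 0.66),
    ("group_b", False, 0.78),
    ("group_b", False, 0.81),
    ("group_b", True, 0.88),
]


def false_match_rate_by_group(comparisons, threshold):
    """Share of different-person pairs wrongly scored as a match, per group."""
    impostor_pairs = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, score in comparisons:
        if same_person:
            continue  # genuine pairs cannot produce a false match
        impostor_pairs[group] += 1
        if score >= threshold:
            false_matches[group] += 1
    return {g: false_matches[g] / impostor_pairs[g] for g in impostor_pairs}


# Hypothetical decision threshold; real systems tune this value.
rates = false_match_rate_by_group(COMPARISONS, threshold=0.7)
for group, rate in sorted(rates.items()):
    print(f"{group}: false match rate = {rate:.2f}")
```

If one group’s rate comes out well above another’s at the same threshold, the system is handing out more wrong “matches” to that group, and that is the “close enough” problem in numbers.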

So yes, pulling the plug on flawed tech isn’t “giving up on policing.” It’s refusing to turn law enforcement into a dystopian guessing game.

🔥 Challenges 🔥

Are we rushing headfirst into a future where dodgy algorithms make life-altering decisions—or are we finally waking up to the risks? ⚖️

How much trust should we really place in systems we barely understand… especially when they get it wrong?

Drop your take directly in the blog comments—sharp, skeptical, or spicy. 💬🔥

👇 Hit comment, like, and share if you think “AI justice” should actually involve… well, justice.

The best takes will be featured in the next magazine issue. 🎯📝
