First, hats off to Brian Gallagher for his insightful, slightly horrified breakdown of why ChatGPT sometimes invents scientific citations like a caffeinated undergrad on deadline. His article, “Why ChatGPT Creates Scientific Citations That Don’t Exist”, is a must-read for anyone who’s ever tried to fact-check an AI and ended up in an existential spiral.

Now let’s drag this into the Chameleon’s lab and run it through our patented “WTF is Going On?” machine.

ChatGPT: Confident, Convincing, and Occasionally Full of Crap

Gallagher reveals the ugly truth: ChatGPT doesn’t really know things. It pattern-matches against its training text until the output sounds right. It’s like that friend who always sounds smart at dinner parties but turns out to be quoting a mix of TED Talks, horoscopes, and Fast & Furious 6. The kicker? That friend sounds so sure, you believe every word.

Hallucinations: Not Just for Shamans Anymore

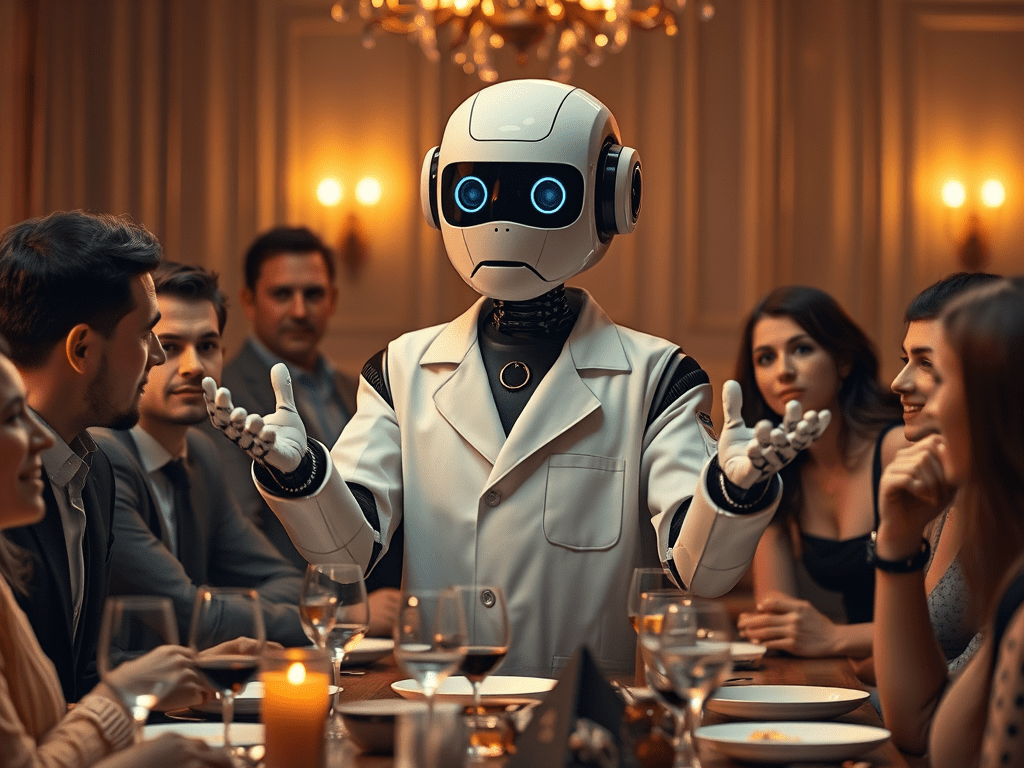

In the AI world, we call these fake citations hallucinations. But calling them that is generous. It’s like saying your accountant hallucinated your tax refund into a felony. These aren’t just harmless daydreams—they’re confident fabrications wearing lab coats. They’re science cosplay.

Why It Happens: Neural Probabilities, Not Intentional Lies

GPT isn’t trying to deceive you—it’s just generating the most statistically probable sequence of words based on its training. If enough real citations looked like “Smith et al., 2019, Journal of Cognitive Science Bullshittery,” it’ll generate something that feels like it belongs. In other words, it’s not a liar. It’s an improv artist trapped in a lab.
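For the tinkerers in the lab, here is a deliberately tiny sketch of that idea. It is an assumption-laden toy, not how GPT actually works: a real model predicts tokens with a neural network conditioned on everything before them, while this one just counts how often each author, year, and journal showed up and fills the three slots independently. The "training data", the journal names, and the function names (`slot_counts`, `sample_citation`, `format_citation`) are all made up for illustration.

```python
import random
from collections import Counter

# Hypothetical "training data", invented for this example: (authors, year, journal).
SEEN_CITATIONS = [
    ("Smith et al.", 2019, "Journal of Imaginary Results"),
    ("Smith et al.", 2017, "Annals of Plausible Findings"),
    ("Nguyen et al.", 2019, "Journal of Imaginary Results"),
    ("Garcia et al.", 2021, "Annals of Plausible Findings"),
]


def slot_counts(citations):
    """Count how often each value appears in each slot (authors, year, journal)."""
    return [Counter(slot) for slot in zip(*citations)]


def sample_citation(counts, rng):
    """Fill each slot independently, weighted by how often each value was seen."""
    parts = []
    for counter in counts:
        values = list(counter.keys())
        weights = list(counter.values())
        parts.append(rng.choices(values, weights=weights, k=1)[0])
    return tuple(parts)


def format_citation(citation):
    """Render a citation tuple in the familiar 'Author, Year, Journal' shape."""
    authors, year, journal = citation
    return f"{authors}, {year}, {journal}"


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs match
    counts = slot_counts(SEEN_CITATIONS)
    for _ in range(5):
        citation = sample_citation(counts, rng)
        # Every piece was seen somewhere; the combination may never have existed.
        label = "seen" if citation in SEEN_CITATIONS else "invented"
        print(f"{format_citation(citation)}  [{label}]")
```

Every piece of every output is familiar, which is exactly why the result feels like it belongs. Nothing in the sampler ever checks whether the combination exists. With only four "real" citations and eighteen possible combinations, most of what it can produce is science cosplay.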

And That’s Why I Love It

Yes, you read that right. That’s why I love it. Because ChatGPT, like the best kind of madness, shows us the truth hiding behind the curtain: that most of our “knowledge” is a series of well-stitched guesses we’ve agreed not to interrogate too closely. ChatGPT just makes the seams visible.

The Machine is a Mirror

When it makes up a citation, it’s not being evil—it’s echoing our culture of bluff, bluster, and pretending to have read the article we’re quoting. The machine is us—just faster, more eloquent, and slightly worse at double-checking.

Chameleon Verdict

ChatGPT’s imaginary citations are less a flaw and more a revelation. They remind us that even intelligence—real or artificial—relies heavily on confidence, coherence, and the deeply human urge to sound like we know what we’re talking about. It’s not just an AI glitch. It’s a glitch in the Matrix of modern discourse.

So don’t get mad when ChatGPT fakes a source. Raise an eyebrow, pour a drink, and ask yourself: When was the last time you checked a footnote?
